The most significant friction point in any creative department isn’t usually the initial spark of an idea; it’s the grueling 72 hours that follow a stakeholder’s “just one small change” request. In traditional production, a single lighting adjustment or a shift in composition often triggers a cascading series of manual tasks—re-masking, re-rendering, and re-compositing. For creative operations leads, this linear progression is the primary enemy of scale.

The introduction of generative models like Nano Banana Pro has fundamentally shifted the logistics of this process. We are moving away from a world of manual asset construction and toward a reality defined by compressed, iterative loops. However, achieving genuine production velocity requires more than just access to a fast model. It requires a structural rethink of how we handle review cycles and the technical gap between a “good enough” generation and a high-resolution, deliverable asset.

The Evolution of Production Velocity in Generative Media

Production velocity is often misidentified as “volume.” It is easy to generate a hundred variations of an image in an afternoon, but if none of those images meet the technical requirements for a 4K display or a brand-compliant layout, the velocity is effectively zero. In a traditional pipeline, the bottleneck is the manual labor of creation. With the integration of Nano Banana Pro AI, the bottleneck has migrated from creation to curation.

This shift means the “rendering floor”—the minimum time required to physically produce an image—has collapsed. In its place, we have a curation ceiling where the speed of delivery is limited only by how quickly a lead can identify the “hero” asset and refine it. To maximize this, creative teams must treat the initial prompt not as a final request, but as a low-fidelity prototype.

The goal isn’t to get the perfect image on the first try. Instead, the workflow focuses on reaching a visual “lock” on composition and color palette as quickly as possible. By using high-performance models to iterate through dozens of structural variations, teams can bypass days of mood-boarding and move straight into high-fidelity refinement.

Short-Circuiting the Traditional Review Cycle

The traditional review cycle is a ping-pong match of PDFs, emails, and annotated screenshots. By the time a designer implements feedback, the stakeholder’s mental context has often shifted. Generative tools change this dynamic by turning feedback into a live, collaborative session.

In an AI-augmented environment, the role of a Creative Operations Lead transitions into that of a Workflow Architect. Instead of managing a queue of manual tasks, they manage a pipeline of prompt versioning and seed adjustments. When a stakeholder asks to “make the lighting warmer” or “adjust the depth of field,” these are no longer instructions for a multi-hour rework. They are real-time adjustments that can be visualized in seconds using the right tools.
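One way to make “prompt versioning and seed adjustments” concrete is to record every revision as a small immutable entry. The sketch below is only an illustration: the `PromptVersion` class, its fields, and the `revise` helper are hypothetical names, not part of any Nano Banana Pro interface, and the example prompt is invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PromptVersion:
    """One immutable entry in a prompt history (field names are illustrative)."""
    version: int
    prompt: str
    seed: int
    note: str = ""
    parent: Optional[int] = None  # version number this entry was derived from


def revise(prev: PromptVersion, *, prompt: Optional[str] = None,
           seed: Optional[int] = None, note: str = "") -> PromptVersion:
    """Create the next version, keeping whatever the caller does not change."""
    return PromptVersion(
        version=prev.version + 1,
        prompt=prompt if prompt is not None else prev.prompt,
        seed=seed if seed is not None else prev.seed,
        note=note,
        parent=prev.version,
    )


# Hypothetical review session: the seed stays fixed so that only the
# lighting instruction changes between v1 and v2.
v1 = PromptVersion(1, "ceramic mug on an oak table, soft daylight",
                   seed=1234, note="initial concept")
v2 = revise(v1, prompt=v1.prompt + ", warm golden-hour lighting",
            note="stakeholder: make the lighting warmer")
```

Keeping the seed constant while editing the prompt is what makes a request like “make the lighting warmer” comparable across versions, and the `parent` field lets the team walk back to any earlier approved state.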

This reduces the friction of the review process because it allows for “visual negotiation.” Rather than explaining why a certain change might not work, the lead can simply generate the variation. This immediate feedback loop often reveals that what the stakeholder asked for isn’t actually what they wanted, allowing the team to pivot instantly without wasting production hours.

Architecting the Pipeline: From Banana AI to High-Resolution Delivery

Building a repeatable asset pipeline requires a tiered approach to tool usage. You wouldn’t use a high-end cinematic camera to shoot a quick storyboard; similarly, professional workflows shouldn’t burn high-tier resources on early-stage ideation.

A production-savvy team often starts with Banana AI for high-volume, low-cost conceptualization. At this stage, the focus is on the “big three”: composition, color palette, and subject placement. Once the core concept is approved, the workflow moves into the refinement stage, where Nano Banana Pro AI is used to sharpen textures and define specific details.
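The tiered approach can be sketched as a small approval-gated state machine. This is a hypothetical illustration: the `Stage` enum, the `TOOL_FOR_STAGE` mapping, and the `advance` function are invented names, and the tool labels are simply the ones used in this article.

```python
from enum import Enum


class Stage(Enum):
    CONCEPT = "concept"   # high-volume, low-cost ideation
    REFINE = "refine"     # texture and detail work on the approved concept
    DELIVER = "deliver"   # high-resolution upscale for delivery


# Tool labels taken from the article text; the mapping itself is an assumption.
TOOL_FOR_STAGE = {
    Stage.CONCEPT: "Banana AI",
    Stage.REFINE: "Nano Banana Pro AI",
    Stage.DELIVER: "K-level upscale",
}

NEXT_STAGE = {Stage.CONCEPT: Stage.REFINE, Stage.REFINE: Stage.DELIVER}


def advance(stage: Stage, approved: bool) -> Stage:
    """Move to the next tier only after sign-off; otherwise keep iterating."""
    if not approved:
        return stage
    # DELIVER has no successor, so it maps back to itself.
    return NEXT_STAGE.get(stage, stage)
```

The point of the gate is that an asset never reaches the more expensive tier until composition, color palette, and subject placement have been approved.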

The Role of Inpainting and Outpainting

One of the most common pitfalls in generative workflows is the “start over” instinct. If a generation is 90% perfect but has a localized flaw—perhaps a distorted hand or an awkward background element—inexperienced operators often scrap the entire image.

A tool-savvy pipeline utilizes inpainting to target and repair these specific areas. This maintains the approved parts of the image while addressing the errors. Outpainting follows a similar logic, allowing teams to extend a canvas to fit different aspect ratios (e.g., turning a 1:1 Instagram post into a 16:9 web banner) without losing the original’s stylistic integrity.
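The arithmetic behind an outpaint is worth seeing once. The following sketch assumes a centered original and whole-pixel canvases; `outpaint_canvas` is a hypothetical helper, not a function of any particular tool, and it only computes how much canvas must be generated on each side.

```python
def outpaint_canvas(width: int, height: int, ratio_w: int, ratio_h: int) -> dict:
    """Return the smallest canvas of the target aspect ratio that contains
    the original image, plus the padding to generate on each side."""
    # Compare aspect ratios by cross-multiplication to stay in integers.
    if width * ratio_h < height * ratio_w:
        # Source is narrower than the target: extend left and right.
        new_w = -(-height * ratio_w // ratio_h)  # ceiling division
        new_h = height
    else:
        # Source is as wide as or wider than the target: extend top and bottom.
        new_w = width
        new_h = -(-width * ratio_h // ratio_w)  # ceiling division
    pad_x, pad_y = new_w - width, new_h - height
    return {
        "canvas": (new_w, new_h),
        "left": pad_x // 2, "right": pad_x - pad_x // 2,
        "top": pad_y // 2, "bottom": pad_y - pad_y // 2,
    }


# A 1024x1024 square extended to 16:9 needs 797 new pixel columns in total.
banner = outpaint_canvas(1024, 1024, 16, 9)
```

For the 1:1 to 16:9 case, `banner` describes a 1821x1024 canvas with 398 columns generated on the left and 399 on the right, which means roughly 44% of the final banner is new content that has to match the approved original.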

Bridging the K-Level Resolution Gap

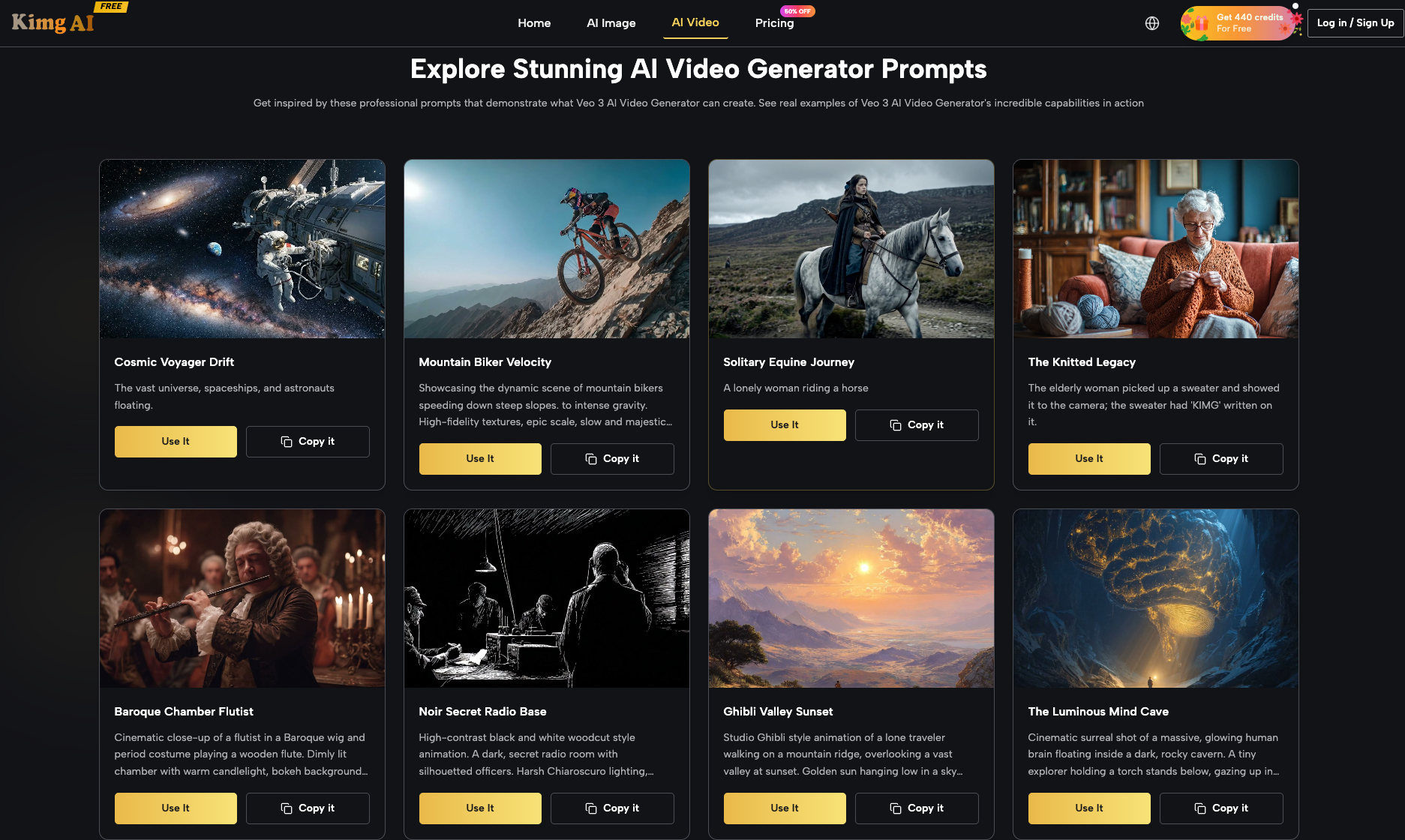

The final stage of delivery is the technical leap. Most generative models produce images at a resolution suitable for social media but insufficient for print or large-scale digital displays. This is where the “K-level” upscaling process, meaning output at 4K or 8K, becomes critical. Within the Kimg AI ecosystem, this isn’t just about enlarging pixels; it’s about a generative upscale that adds plausible detail to the image, so that the 4K or 8K version looks as crisp at full size as the original concept did at low resolution.
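To size the gap, it helps to compute the scale factor a given source needs. The sketch below uses the standard UHD dimensions (3840x2160 for 4K, 7680x4320 for 8K); the `upscale_plan` helper and the idea of chaining 2x passes are assumptions for illustration, not a description of how any specific upscaler works.

```python
import math

# Standard UHD delivery sizes in pixels.
TARGETS = {"4K": (3840, 2160), "8K": (7680, 4320)}


def upscale_plan(src_w: int, src_h: int, target: str) -> dict:
    """Return the linear scale factor needed to cover the target size and,
    assuming a hypothetical upscaler that doubles resolution per pass,
    how many 2x passes that would take."""
    tgt_w, tgt_h = TARGETS[target]
    # Take the larger ratio so that both dimensions reach the target.
    factor = max(tgt_w / src_w, tgt_h / src_h)
    passes = max(0, math.ceil(math.log2(factor)))
    return {"factor": factor, "passes_2x": passes}


# A 1024x576 (16:9) generation needs a 3.75x upscale for 4K and 7.5x for 8K.
plan_4k = upscale_plan(1024, 576, "4K")
plan_8k = upscale_plan(1024, 576, "8K")
```

A 3.75x linear factor means about 14 output pixels for every source pixel, which is why plain interpolation looks soft at this scale and a detail-adding upscale is needed.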

Navigating the Ambiguity of Generative Consistency

Despite the speed of Nano Banana Pro, we must be realistic about the current limitations of the technology. The “unsolved problem” of generative media remains the challenge of perfect brand consistency across a large volume of disparate assets.

The Limitation of Hyper-Specific Typography

It is a mistake to assume that current AI models can reliably handle proprietary typography or complex text rendering without human oversight. While models are improving at “drawing” letters, they do not yet respect the nuances of brand-specific kerning or custom font weights. For assets requiring precise text placement, the AI should be used to generate the background and atmospheric elements, while the typography is handled in a traditional vector environment. Expecting a model to output a pixel-perfect, brand-compliant logo lockup is a recipe for frustration and missed deadlines.

The Uncertainty of Character and Style Drift

Another area where caution is required is in maintaining a single “character” or specific product across 50 different lighting setups and angles. While features like image fusion and seed-locking help, there is still an inherent level of “style drift” in generative outputs. Creative leads should plan for a 10-15% “manual touch” rate, where a human designer steps in to ensure that the product’s specific features—like a unique button placement or a specific shade of brand-protected blue—remain consistent across the campaign.
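The 10-15% planning figure translates directly into a staffing estimate. In this sketch, `manual_touch_budget` is a hypothetical helper and the 30 minutes per fix is a placeholder assumption; substitute your own team's numbers.

```python
def manual_touch_budget(assets: int, low_pct: int = 10, high_pct: int = 15,
                        minutes_per_fix: int = 30) -> dict:
    """Estimate how many assets need a human pass and the designer hours
    involved. minutes_per_fix is an assumed placeholder, not a benchmark."""
    # Ceiling division: a partial asset still needs a full manual pass.
    low = -(-assets * low_pct // 100)
    high = -(-assets * high_pct // 100)
    return {
        "assets_low": low,
        "assets_high": high,
        "hours_low": low * minutes_per_fix / 60,
        "hours_high": high * minutes_per_fix / 60,
    }


# For the 50-asset campaign mentioned above: 5 to 8 assets need retouching.
budget = manual_touch_budget(50)
```

Under these assumptions, a 50-asset campaign should reserve between 2.5 and 4 designer hours for consistency fixes, which is easier to schedule up front than to discover during final review.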

Measuring the True ROI of AI-First Creative Ops

When evaluating the impact of Nano Banana Pro on the bottom line, the metrics must shift from “cost per asset” to “time to market.” In a traditional setup, a large-scale campaign might take six weeks from brief to delivery. An AI-integrated pipeline can cut that to two weeks, not by replacing the creative staff, but by removing the mechanical obstacles that slow them down.

The ROI isn’t just found in the reduction of hours; it’s found in the ability to test more ideas. Because the cost of failure (a “bad” generation) is essentially zero, teams can explore more daring visual directions that they previously would have ignored due to budget constraints.

Ultimately, the success of these tools depends on the operator. A tool like Banana AI provides the engine, but the Creative Operations Lead provides the roadmap. By integrating these models into a structured pipeline of rapid prototyping, targeted inpainting, and high-resolution upscaling, teams can finally overcome the iteration bottleneck that has defined the creative industry for decades. The goal is a workflow that is as fluid as the imagination, moving from a prompt to a K-level asset with minimal friction and maximum control.

Lynn Martelli is an editor at Readability. She received her MFA in Creative Writing from Antioch University and has worked as an editor for over 10 years. Lynn has edited a wide variety of books, including fiction, non-fiction, memoirs, and more. In her free time, Lynn enjoys reading, writing, and spending time with her family and friends.